This blog post presents an automated machine learning approach in Python to create a stress monitoring system with data from devices such as fitness trackers. With the rising popularity of trackers that monitor biological signals 24/7, there is only a matter of time before the technology matures and everyone will be wearing their own ‘doctor AI’ on the wrist, this project is one step in that direction.

Github repository with the full dataset and codebase can be found here. This blog post is a simplified version of my master’s dissertation done during the summer of 2017. For more information about data handling, and other machine learning approaches, please see the full masters dissertation available here.

Implementation

- Cleaning and preparing data

- Training a machine learning model

- Testing the model in a real-life scenario

Dataset for model training

The training data comes from a project conducted at MIT by Healey as a part of her PhD thesis, which consist of body measurements conducted on various young people driving in stressing environments, e.g. rush hour, highways, red lights, as well as a relaxation period to create a non-stressed base reading. The dataset is freely available from Physionet

Automated Machine learning

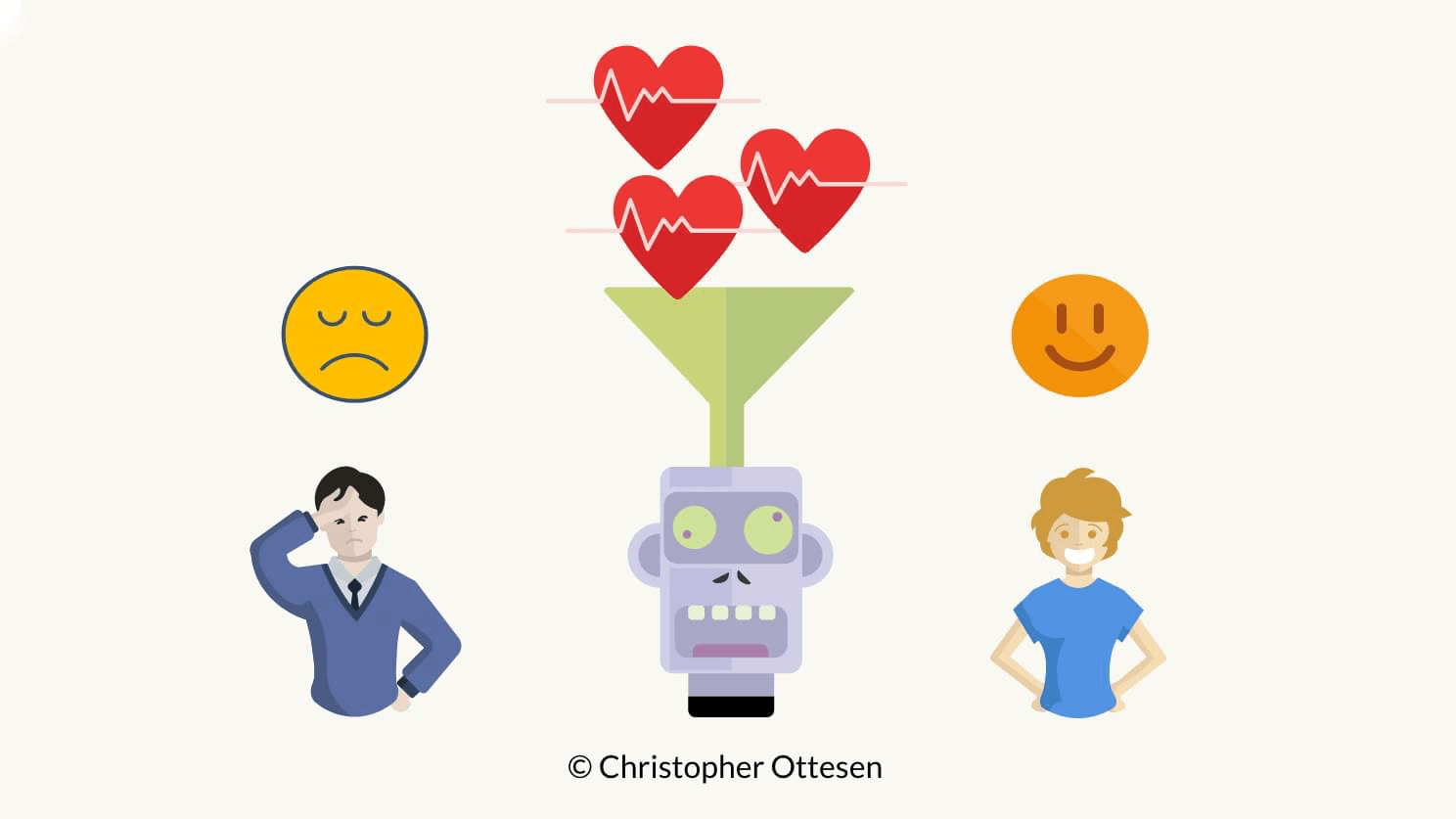

We frame the problem as a binary classification problem – stressed or unstressed, and we will use the free Automated Machine Learning Python Library TPOT to create a model that can detect stress.

Testing the model in a real-life scenario

We then use the trained model and apply it to a real-world scenario using a new data set collected with a Fitbit Charge 2 and a Polar H7 heart rate strap. We decided to monitor people watching a stress inducing horror TV series that was for sure to get the heart pounding.

Background information on biological signals related to stress

This section gives an overview of biological signals as signifiers of stress and how they can be measured with wearable devices.

Stress is one of the most frequently occurring health problems in Europe, and according to a study by Villarejo et all, one in four people that were absent from their work for more than a month were away due to stress triggered issues. The idea is to utilise advances in wearable technology to make non-invasive measures of biological signals more accurate and accessible, leading to a future where health care could be better delivered at a lower cost.

Physiological measures of stress

Stress is the body’s emotional response to a particular situation, where the body releases stress hormones such as adrenaline and cortisol which sharpen the body’s alertness and strength (Villarejo et all). Stress affects several physical processes in the autonomic nervous system (ANS) leading to increased muscle tension, change in concentration and changes in the heart rate and heart rate variability (Taelman et al).

In general, stress can be split into three different categories (Bakker et al):

- Acute stress

- Episodic acute stress

- Chronic stress

The acute stress factor is characterised by short-term arousal where the body returns to its normal state after the stress factor has passed (Bakker et al). It’s this category of stress that lies within the scope of this project.

Measuring stress with galvanic skin response

Galvanic Skin Response sensors (GSR) are commonly used to measure stress by measuring the resistance of the skin, and the more stressed a person is, the more the person sweats and resistance decreases (Villarejo et all). In research conducted by (Villarejo et all) they were able to correctly classify the persons stressed state with a success rate of almost 91% by just using Galvanic Skin Response measurements, but they note that in general settings it’s hard to differentiate between being stressed and just normal sweating from physical activity.

Measuring Heart Rate Variability

Heart rate variability (HRV) is an umbrella term for a range of features derived from the time interval between two heartbeats – referred to as RR intervals. HRV is traditionally measured with a clinical level electrocardiogram (ECG), but thanks to advances in technology it can now be measured with wearable devices such as heart rate straps and some advanced Fitness trackers. Research successfully connects heart rate variability to measures for stress, cancer monitoring, diabetes, mellitus, sleep problems, difficulties regulating emotions and more.

In this project, we’re aiming to predict stress from HRV data sourced from commercially available wearables.

The RR interval is the time interval in milliseconds between two heartbeats, and it is the measure used to derive Heart Rate Variability (Mietus and Goldberger).

The heart rate variability measure can be obtained by applying time and frequency calculations on consecutive RR samples. The most commonly used time domain measures can be found in the Time Domain Measures table below (Mietus and Goldberger), and the most commonly used frequency domain measures can be found in the Frequency Domain Measures table below (Mietus and Goldberger).

Frequency domain measures are commonly derived with the Lomb-Scagle periodogram, a variant of the Fourier Transform (Mietus and Goldberger). The Lomb algorithm is especially suited for data with uneven samples, which is in the nature of RR intervals. The generalised form of the Lomb-Scargle Periodogram equation is given by the equation below where A, B, and T are arbitrary functions of the frequency f and observation times ????????

(Vanderplas)

$$P(f)=\frac{A^{2}}{2}(\sum_{n}^{~}g_{n~}~COS(2\pi f[ t_{n~}-~T ]))^{2}+~\frac{B^{2}}{2}~(\sum_{n}^{~}g_{n~}~sin(2\pi f[t_{n~}-~T]))^{2}$$

PPG (photoplethysmography) is a commonly used sensor to measure heart rate and heart rate variability by measuring electrical signals based on light reflected from blood flow changes (Plews et al). Studies show that PPG is not as accurate as Electrocardiogram (ECG) which is considered the gold standard when measuring the heart, but it gives satisfactory results when measuring heart rate variability (Plews et al). The consumer-targeted Microsoft Band 2’s PPG sensor was compared to the performance of an ECG device in measuring RR peaks by measuring 49 students taking a memory test in front of a computer. The results show that the two devices were very consistent with each other with a coefficient of determination of 0.995 (Chudy).

| Time Domain Measures | |

|---|---|

| AVNN | Average of all NN intervals |

| SDNN | Standard deviation of all NN intervals |

| SDANN | Standard deviation of the averages of NN intervals in all 5-minute segments of a 24-hour recording |

| SDNNIDX | Mean of the standard deviations of NN intervals in all 5-minute segments of a 24-hour recording |

| rMSSD | Square root of the mean of the squares of differences between adjacent NN intervals |

| pNN50 | Percentage of differences between adjacent NN intervals that are greater than 50 ms |

| Frequency Domain Measures | |

|---|---|

| TOTPWR | Total spectral power of all NN intervals up to 0.04 Hz |

| ULF | Ultra Low Frequency – Total spectral power of all NN intervals up to 0.003 Hz |

| VLF | Very Low Frequency – Total spectral power of all NN intervals between 0.003 and 0.04 Hz |

| LF | Low Frequency – Total spectral power of all NN intervals between 0.04 and 0.15 Hz |

| HF | High Frequency – Total spectral power of all NN intervals between 0.15 and 0.4 Hz |

| LF/HF | Ratio of low to high-frequency power |

Measuring stress with heart rate variability

Changes in heart rate variability has been successfully linked to stress by several studies. For example a study conducted by (Taelman et al) identified how stress affects physiological factors in the body and how the autonomic nervous system is activated, leading to change in both heart rate and heart rate variability (Taelman et al). Hjortskov et al conducted a study in a stressing work environment where they measured the effects of mental stress on heart rate variability. Their research shows that the biological reaction to stress is visible in different components of the Lomb-Scargle spectrogram Hjortskov et al. The high frequency (HF) and the low frequency (LF) bands were significantly lower during resting periods compared to work sessions and the ratio between the LF and HF was significantly reduced during resting periods Hjortskov et al.

Dataset preparation

The dataset is in a physionet specific format divided into 18 .dat files and 18 .hea files with accompanying metadata. The data consists of signals for ECG, EMG, GSR measures from the foot, GSR measures from the hand, HR and Respiration. All values are float values, with a sampling frequency of 15.5 samples per second. To be able to work with the dataset we use a tool from Physionet called WFDB to convert the files into a comma separated .txt files with column names. Thereafter heart rate variability is derived from the RR intervals using a custom python script that calls the WFDB terminal application.

The heart rate variability is calculated from a sliding window of 20-seconds of adjacent RR interval, and ten samples of RR intervals from the previous window and five samples of RR intervals from the next window. This results in a window that overlaps in time with a total size of about 30 seconds and avoids a hard cut-off by using overlapping windows.

This approach makes it possible to include events at the end or the start of the windows, so that more RR peaks are counted in when calculating heart rate variability features, at the same time the resulting dataset will have more samples than if the window was e.g. 30 seconds without overlapping. The resulting dataset is saved to a .csv file.

| HR | interval in seconds | AVNN | RMSSD | pNN50 | TP | ULF | VLF | LF | HF | LF_HF | |

|---|---|---|---|---|---|---|---|---|---|---|---|

| count | 4129.000000 | 4129.000000 | 4129.000000 | 4129.000000 | 4129.000000 | 4129.000000 | 4129.000000 | 4129.000000 | 4129.000000 | 4129.000000 | 4129.000000 |

| mean | 81.144252 | 0.788440 | 0.788020 | 0.027223 | 0.034246 | 0.060604 | 0.057902 | 0.000728 | 0.000003 | 0.000001 | 3.555695 |

| std | 10.871620 | 0.109768 | 0.109363 | 0.016964 | 0.025153 | 0.056901 | 0.057757 | 0.003194 | 0.000030 | 0.000010 | 0.244431 |

| min | 60.558824 | 0.527944 | 0.528170 | 0.000000 | 0.000000 | 0.000008 | 0.000000 | 0.000000 | 0.000000 | 0.000000 | 0.418526 |

| 25% | 73.394737 | 0.718930 | 0.720548 | 0.013743 | 0.025641 | 0.019485 | 0.012480 | 0.000000 | 0.000000 | 0.000000 | 3.555695 |

| 50% | 78.973684 | 0.789732 | 0.790125 | 0.022889 | 0.027027 | 0.047369 | 0.035371 | 0.000000 | 0.000000 | 0.000000 | 3.555695 |

| 75% | 87.527778 | 0.869053 | 0.868135 | 0.043000 | 0.030303 | 0.100771 | 0.098881 | 0.000000 | 0.000000 | 0.000000 | 3.555695 |

| max | 115.446809 | 1.040088 | 1.038360 | 0.080981 | 0.257143 | 0.329891 | 0.329891 | 0.035841 | 0.000616 | 0.000261 | 9.117240 |

Labelling the data

The data doesn’t natively contain any direct labels for stress, and so had to be derived. This was done by measuring galvanic skin response signal from the foot, as the foot measurement looks cleaner than hand measurements (probably caused by more movement of the hands). The median of the galvanic skin response value is taken as the cut-off point to determine the stressed state, any value above the median value is labelled as stress, and any value below the median value is labelled as not stressed, and effectively framing the problem as a binary machine learning task.

Following this method, around half of the data is labelled as stressed, a labelling one can reason is quite accurate as the drivers were not stressed at all times, e.g. during the relaxation period. This way of labelling data follows the same approach used by Liu and Ulrich on the same dataset.

The figure above shows the GSR signal with stressed segments annotated in red.

The cleaned and prepared dataset can now be loaded, and is available in the project Github repository.

dataframe_hrv = pd.read_csv("dataset/dataframe_hrv.csv")

dataframe_hrv = dataframe_hrv.reset_index(drop=True)Lets take a look at the dataset.

display(dataframe_hrv.head(5))| ECG | EMG | HR | RESP | Seconds | footGSR | handGSR | interval in seconds | marker | newtime | stress | time | NNRR | AVNN | SDNN | RMSSD | pNN50 | TP | ULF | VLF | LF | HF | LF_HF | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | -0.001974 | -0.004737 | 77.815789 | 10.801842 | 12.529684 | 2.417132 | 10.889447 | 0.614632 | NaN | 12.529684 | 0.0 | 12.529684 | 0.973684 | 0.617297 | 3.558630e-02 | 0.015203 | 0.055556 | 0.001238 | 0.0 | 0.000696 | 0.000407 | 0.000135 | 3.00200 |

| 1 | 0.002935 | -0.004457 | 101.978261 | 10.750609 | 30.503500 | 2.417109 | 11.251065 | 0.647826 | NaN | 30.503500 | 0.0 | 30.503500 | 0.978261 | 0.647889 | 1.354660e-02 | 0.013858 | 0.045455 | 0.000144 | 0.0 | 0.000009 | 0.000060 | 0.000075 | 0.79371 |

| 2 | 0.006745 | -0.003426 | 104.957447 | 10.557234 | 52.523021 | 2.226872 | 11.379638 | 0.646383 | NaN | 52.523021 | 0.0 | 52.523021 | 0.978723 | 0.645000 | 2.240000e-08 | 0.000000 | 0.000000 | NaN | 0.0 | NaN | NaN | NaN | NaN |

| 3 | -0.004043 | -0.002532 | 87.702128 | 10.640128 | 74.402170 | 2.173021 | 11.470830 | 0.645000 | NaN | 74.402170 | 0.0 | 74.402170 | 0.978723 | 0.645000 | 2.240000e-08 | 0.000000 | 0.000000 | NaN | 0.0 | NaN | NaN | NaN | NaN |

| 4 | 0.012745 | -0.004426 | 88.829787 | 10.699319 | 96.219617 | 2.017106 | 11.135255 | 0.645000 | NaN | 96.219617 | 0.0 | 96.219617 | 0.978723 | 0.645000 | 2.240000e-08 | 0.000000 | 0.000000 | NaN | 0.0 | NaN | NaN | NaN | NaN |

Some further data cleaning

We’ll first make sure the labels are ints of either 1 (stressed) or 0 (relaxed).

def fix_stress_labels(df='',label_column='stress'):

df['stress'] = np.where(df['stress']>=0.5, 1, 0)

display(df["stress"].unique())

return df

dataframe_hrv = fix_stress_labels(df=dataframe_hrv)We’ll manually clean up all the rows, and fill missing values with the mean.

Note that the heart rate columns are further cleaned with a median filter, this is to make sure errors in readings are smoothed out.

def missing_values(df):

df = df.reset_index()

df = df.replace([np.inf, -np.inf], np.nan)

df[~np.isfinite(df)] = np.nan

df.plot( y=["HR"])

df['HR'].fillna((df['HR'].mean()), inplace=True)

df['HR'] = signal.medfilt(df['HR'],13)

df.plot( y=["HR"])

df=df.fillna(df.mean(),inplace=True)

return df

dataframe_hrv = missing_values(dataframe_hrv)Feature Removal and Selection

The galvanic skin response features used to label the data are removed, along with the ECG and EMG data as this information cannot be easily obtained from wearable devices targetting the consumer market. The ultra low frequency (ULF) segment is also discarded as a majority of the values are zero, which is probably due to the short time intervals. Also, the information from the very low-frequency band (VLF) band is discarded as research by Hjortskov et al has shown that the very low-frequency band proves to be an unreliable measure in readings under 5 minutes and the samples we have in the dataset is not very consistent.

The original dataset collected respiration with a strap around the chest, while some research by Meredith et al claim that it is possible to derive respiration from waveform analysis of PPG data, neither wearing a strap around the chest or deriving respiration from PPG is done in further data collection in this research, and the respiration feature is therefore discarded.

The feature set now consists of heart rate, AVNN, RR intervals, SDNN, RMSSD, pNN50, TP, LF, HF, LFHF. With a total of 4132 samples where the heart rate and the RR intervals are the mean values from the 30-second interval required to extract the heart rate variability features.

selected_x_columns = ['HR','interval in seconds','AVNN', 'RMSSD', 'pNN50', 'TP', 'ULF', 'VLF', 'LF', 'HF','LF_HF']

X = dataframe_hrv[selected_x_columns]

y = dataframe_hrv['stress']Automated machine learning and evolutionary algorithms

The machine learning model is created using TPOT, an automated machine learning tool that optimizes machine learning pipelines using genetic programming. Essentially, TPOT makes a bunch of models with different variations of algorithms and data processing, and only the “strongest” ones survive each generation. This works similar to natural selection where only the strongest species (pipeline) survives and in the end (in theory) the best possible evolution has occurred. TPOT has to run for a good while to successfully have enough time to evolve, so at this stage.

TPOT is initialized to train with five-fold cross validation based on 80% of the data, and then validates the trained model on 20% of the data.

TPOT was initialized with a population size of 100 and the number of generations was set to 400. Essentially, this means that TPOT will train 100 models for each generation it iterates through.

def do_tpot(generations=5, population_size=10,X='',y=''):

X_train, X_test, y_train, y_test = train_test_split(X, y,train_size=0.80,test_size=0.20)

tpot = TPOTClassifier(generations=generations, population_size=population_size, verbosity=2,cv=3)

tpot.fit(X_train, y_train)

print(tpot.score(X_test, y_test))

tpot.export('tpot_pipeline.py')

return tpot

tpot_classifer = do_tpot(generations=100, population_size=20,X=X,y=y)TPOT constructed a K-nearest neighbors pipeline achieving a F1 score of 0.8103 on the validation set, and 0.8060 with cross-validation during training.

Note that we ran TPOT multiple times, and in the nature of a genetic library each run resulted in slightly different scores and often with different algorithms chosen in the pipeline, but the scores were consistent around F1 0.80.

Although the highest performance was achieved by the KNN model, it should be noted that due to the amount of storage needed (all the data) and inference complexity (O(ndk) for a simple implementation) it might not be suited in a real-life scenario on a wearable sensor.

Testing the trained model in a real-life scenario

To test the model in a real-life scenario, we did a small-scale qualitative assessment – a fancy excuse to watch some horror movies to provoke stress.

The plan

- Monitor biological signals

- Gather accelerometer data from a Fitbit Charge 2

- Gather heart rate readings from the Fitbit Charge 2

- Gather RR intervals with a Polar H7 Chest Strap

- Watch a stress provoking TV show

- See if the previously trained model can detect the stressing scenes

Several studies use horror movies to provoke mental stress and by using a Polar H7 and a Fitbit Charge 2 we’ll sit back with a cup of tea (actually we won’t, as the hot tea might affect our results) and watch something horrendous on the TV. Before starting we’ll first lay down and relax for a few minutes to get a base reading. Thereafter a short stroll will provoke some physical stress that the accelerometer in the Fitbit (hopefully) will annotate, before finally putting on the video clip.

We are using the Fitbit’s accelerometer to differantiate between stress caused by movement and physiological stress caused by eg horrible scenes on the screen. This is necessary as it’s normal that the heart rate changes when the body is doing physical work, but it shouldn’t change too much when, for example, sitting or lying down.

The RR interval data was gathered with the Elite HRV iPhone app connected to a chest strap, as the Fitbit device does not give access to individual RR peaks, just minute-to-minute heart rate readings. The data from the two devices were then merged, where the accelerometer data is in the form of steps, while minute-to-minute heartbeat is used from the Fitbit. The step data allows tagging RR peaks as a precaution to avoid misclassification as a result of physical activity raising the heart rate and lowering the RR intervals.

Head over to this blog post to read more about collecting HRV data with the EliteHRV app. DataEspresso: Reading RR intervals from EliteHRV with Pandas

Provoking Stress

The test in this experiment is setup as follows:

- A five-minute relaxation period (base reading)

- A 15-minute walk

- Descend six-floors downstairs

- A short walk along a busy road, ending in the same place

- Climb up the 6 floors

- A five-minute relaxation period

- Watch a horror movie

The physical movement of the test is supposed to lower the RR intervals and raise the heart rate creating physical stress so that the model can differentiate between physical stress and mental stress by using the accelerometer available in the Fitbit device. The walk is followed by a rest period of 5 minutes lying down, to allow the heart to recover from the physical load.

To induce an emotional stress, we showed the subjects either an episode from the TV show Vikings, or an episode from American Horror Story. The intention is that only violent scenes will impact the RR intervals and cause short-term stress. The two series were chosen to ensure it was a content that the subjects had not watched before.

The stress test was performed on five males and three females in the age range of the early twenties to mid-thirties, which are comparable demographics to the training data created by Healey in her experiments.

Of the eight subjects participating in the experiment, five were shown an episode from the TV series Vikings, and three were shown an episode from the horror series American Horror Story.

Vikings

The HBO series Vikings is a lousily-historical based TV-show that follows the old Norsemen’s conquest of Great Britain. The show has several horrible scenes with battles, human sacrifice, slaughters and more that should create an impact on the nervous system and provoke some stress.

American Horror Story

The TV series American Horror Story consists of several seasons, where each session is separate ‘mini-series’. We watched season two, based around an old dreadful Asylum that certainly should create an impact on the nervous system and provoke some mental stress.

Annotating Stress

The subjects had never been exposed to the particular episode played but are familiar with the TV show(s). The subject was told to mentally log the scenes that could cause stress and after the session write down parts of the clip where stress might have occurred. The subjective notes are compared with the areas of the reading annotated as stress and compared to the scene occurring during the period of the classified stressed moments.

Although not perfect, this approach allows us to approximately localize the time periods where emotional stress was observed.

Results from real-world test

The monitoring system was applied on eight young adults following the test describe above. One thing to note with this test is that what is perceived as stressing is very subjective and different people have a different level of tolerance before their heart rate variability is affected by what was happening on the screen. Therefore, this test asked the subjects to mentally note when they felt a certain scene was stressing. In this way, their response can be compared with the readings retrospectively.

The RR intervals of each sample were plotted and the segments marked as stress from the model was annotated in red, whereas the segments during movement registered from the Fitbit device were marked in green.

The data gathered is freely available in the project GitHub repository, but note that the files are unlabeled.

def plotFitBitReading(dfnewHRV='', predictor = "none",selected_x_columns=''):

dfnewHRV = missing_values(dfnewHRV)

dfnewPol = dfnewHRV[selected_x_columns].fillna(0)

print(dfnewPol.columns)

print(dfnewPol.shape)

pred = predictor.predict_proba(dfnewPol)

dfpred = pd.DataFrame(pred)

dfpred.columns = [["FALSE","TRUE"]]

dfpred['stress'] = np.where(dfpred["TRUE"] > 0.5, 1, np.nan)

dfnewHRV["stress"] = dfpred["stress"]

dfnewHRV.loc[dfnewHRV["steps"] > 0, 'stress'] = np.nan

#mark is to mark the RR peaks as stress

dfnewHRV.loc[dfnewHRV["stress"] == 1, 'stress'] = dfnewHRV['interval in seconds']

dfnewHRV.loc[dfnewHRV["steps"] > 0, 'moving'] = dfnewHRV['interval in seconds']

dfnewHRV["minutes"] = (dfnewHRV['newtime']/60)/1000

from itertools import cycle, islice

my_colors = list(islice(cycle(['b', 'r', 'y', 'k']), None, len(dfnewHRV)))

plot = dfnewHRV.plot(x="minutes", y=['interval in seconds',"stress", "moving"],color=my_colors)

fig = plot.get_figure()

input_df = pd.read_csv('path to reading')

plotFitBitReading(input_df,tpot_classifer,selected_x_columns)Results from readings

Head over to the GitHub repository to see more readings and play around with the datasets yourself.1

Conclusions

This project presents a machine learning approach for detecting stress factors in heart rate variability measurements on data collected from wearable devices.

These results demonstrate that heart rate variability is a reasonable metric for detecting stress and that the automatic machine learning library did a good job in finding optimal parameters.

When testing the models on data collected from wearable sensors and taking advantage of detecting movement with the accelerometer, the model could often correctly classify subjects as stressed or not, down to the minute by using heart rate, heart rate variability and raw RR intervals as features. However, sometimes it has difficulties to take into account individual differences in heart rate variability – which is most likely due to the limited training set and subjective mental reactions to violent scenes.

Our small-scale study demonstrates that the current consumer technology paired with machine learning techniques has the potential to provide a self-monitoring system that can detect abnormalities in the nervous system and successfully classify stress.

Key findings

Tagging physical stress with Accelerometer data

Accelerometer data helps the tagging accuracy of physical stress caused by movement so that these segments don’t get misinterpreted by the algorithm as mental stress.

Training AutoML models

With labelled data, models optimized through automated machine learning can successfully learn from the data and apply it to real-world data.Nevertheless, scenario-based evaluation in real-world applications remains challenging due to the lack of labelled data. To this end, we have used subjective assessment based on the subject’s verbal feedback regarding their stress level.

The results demonstrate the usability of the combination of wearable sensors and accelerometer to detect stress in the real world.

Further Work

Creating a more extensive dataset

The dataset presented in this project is relatively small, and the model presented is trained on data from more extensive equipment than simple wearable devices.

The next sensible step would be to collect data from wrist worn or similar devices, and data being labelled using GSR sensors, for instance. Additionally, it would be useful to collect information about the subjects’ movement directly and make it available as part of the training data set.

Deep Learning

We limited ourself to traditional algorithms, and one reason is the limited amount of training data. With more data, we would like to see how an LSTM perform. The LSTM will be able to take into account previous time steps in the reading and might be more generalisable against eg. individual biological responses.

Transfer Learning

Transfer learning can be used to extend a base Neural Net to detect stress and then a final layer is added to account for individual differences in heart rate variability which is subject-specific. Although this approach requires more training data, especially those from the target subject, it has the potential to improve the model accuracy further.

Examine different biological processes for stress

This project mainly focuses on heart rate variability, but it would be interesting to know what biological processes measurable with wearable devices could be good predictors for stress. Both different heart rate variability measures and other signals and transformations, for example, galvanic skin response are commonly used measures of stress; and should be explored thoroughly.

Credits

This blog post is a simplified version of my master’s dissertation done during the summer of 2017. For more information about data handling, and other machine learning approaches, please see the full masters dissertation available here.

Co-authors:

- Dr.Tony Stockman, my project supervisor.

- Norman Poh, a colleague at that have overseen the experiment and provided useful feedback.

Awesome, thank you for the tutorial and intro to heart rate variability. This post is a great 101 on the heart.

Good day! I simply wish to offer you a big thumbs up for the excellent info you’ve got right here on this post. I will be coming back to your blog for more soon.